Test Analytics for Continuous Integration

We are introducing the second version of our Test Analytics feature, Testspace Insights. This article gives an overview of the feature set and briefly describes other important metrics being considered for future releases.

What is the Purpose of Test Analytics?

Test Analytics are used to optimize the change-build-test workflow. When complex software is built by large, distributed teams, time is one of the most challenging resources to manage. The efficiency of this workflow directly impacts the overall time required to release software, which is the concern of Release Management. Release Management is the process of managing, planning, scheduling, and controlling a software build through its different stages, including testing and deployment.

Insights facilitate the improvement of the change-build-test workflow.

So, what are some of the time-sinks of the change-build-test workflow?

- Insufficient test coverage: The automated tests are poorly designed and don’t identify enough side-effects from source code changes. Thus, defects escape into the next phase.

- Poor test failure management: Ignoring test failures while the software continues to change. Tracking and responding to test failures using console output, email, and other archaic techniques. Requiring a heavy and time-consuming bug tracking system to manage resolving failures.

- Unstable results: Weak test automation, random test failures, and flaky tests all combined can generate noise, resulting in time-wasting activities.

- Lack of transparency: What is the current status? Are we progressing? Are members communicating? Meetings, emails, and handcrafted reports are useful, but visibility solely based on these traditional methods, versus mined data, can inhibit quick and timely informed decision making.

Using Insights generated from the change-build-test workflow can significantly improve team member engagement and help drive operational effectiveness in releasing software.

Connects to Existing Automation

Testspace connects to your change-build-test workflow using a simple command line utility. The utility is used to push content from your test automation system to the Testspace server. Content such as build status, test results, code coverage, source code changes, etc., are automatically collected, stored, analyzed, and then published with the current status. Computational analysis is continuously performed on the historical data using regression patterns, test effectiveness calculations, trends in coverage, and rates for resolving failures; all used together to generate Insights.

How to use the Indicators

The Indicators are calculated from historical data collected for the selected period. The three Indicators focus on different areas, all aimed at providing more visibility into the efficiency of the change-build-test workflow. They work in concert, using mined data for continuous assessment.

The core tenets of an efficient workflow are:

- stable and consistent test results

- automated tests that are capturing side-effects (i.e. failing tests) from source code changes

- resolution of test failures at a reasonable rate

Efficient workflows generate healthy regressions, capture side-effects, and resolve these failures at a rapid rate.

In addition to the Indicators, other important metrics are also provided showing system instability, high-frequency failures (i.e. team ignoring the failures), and trends in coverage.

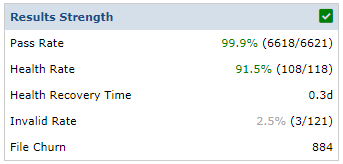

Results Strength

The Results Strength indicator provides a macro view of the consistency of the results generated by your test automation. The very first step in optimizing the workflow is stabilizing automated test results. Without a reasonable level of consistency from testing, it is nearly impossible to make process improvements.

Improvement requires consistent test results.

A combination of the overall Passing average and the Healthy Results average is used. Healthy Results are the percentage of passing tests determined to be “reasonable” based on the current stage of development. The default is 100%, but this can be defined by the team. By tracking both the average Pass rate and Health rate, the Results Strength indicator provides insight into the collective strength of the software under test for the selected time period.

Results tagged as Invalid are also factored in. A result is tagged Invalid when it varies significantly from previous results, for example when the case count drops.
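To make the inputs to Results Strength more concrete, here is a minimal Python sketch. It is not the Testspace algorithm: the `ResultSet` type, the `results_strength` function, the rule that a result set is "healthy" when its pass rate meets the team threshold, and the 50% case-count drop used to tag a result as Invalid are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class ResultSet:
    """One published set of test results (hypothetical structure)."""
    passed: int
    total: int


def results_strength(results, healthy_threshold=100.0, invalid_drop=0.5):
    """Summarize pass and health rates over a period.

    Assumptions (not taken from Testspace):
    - a result set is Invalid when its case count falls by more than
      `invalid_drop` (as a fraction) versus the previous valid result set;
    - a result set is healthy when its pass rate >= `healthy_threshold`.
    """
    rates = []
    invalid = 0
    prev_total = None
    for r in results:
        if prev_total is not None and r.total < prev_total * (1 - invalid_drop):
            invalid += 1  # large drop in case count: exclude from the averages
            continue
        rates.append(100.0 * r.passed / r.total if r.total else 0.0)
        prev_total = r.total

    if not rates:
        return {"pass_avg": 0.0, "healthy_pct": 0.0, "invalid": invalid}

    healthy = sum(1 for rate in rates if rate >= healthy_threshold)
    return {
        "pass_avg": sum(rates) / len(rates),
        "healthy_pct": 100.0 * healthy / len(rates),
        "invalid": invalid,
    }


if __name__ == "__main__":
    period = [ResultSet(100, 100), ResultSet(90, 100),
              ResultSet(10, 10), ResultSet(100, 100)]
    print(results_strength(period))
```

In this example the third result set reports only 10 cases after a run of 100, so the sketch treats it as Invalid and leaves it out of both averages.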

For more information regarding Results Strength refer here.

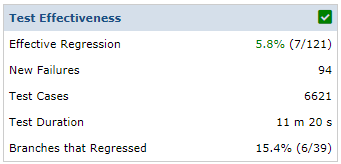

Test Effectiveness

The Test Effectiveness indicator assesses how good the automated tests are at capturing side-effects of source code changes, that is, at generating new failures. A failure counts as new when the test has recorded a minimum of 5 non-failing statuses before it. In general, new failures should be the result of source code changes; otherwise, instability in the application or infrastructure introduces additional quality risk (i.e. random factors). If the source code is changing at a rapid rate with no regressions, there may be a problem with the usefulness of the existing tests.
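The "new failure" rule can be sketched in a few lines of Python. This is an illustration only: the function name, the `"pass"`/`"fail"` status strings, and the reading of the rule as 5 consecutive non-failing statuses immediately before the failure are assumptions, not the documented Testspace behavior.

```python
def new_failure_indices(statuses, min_non_failing=5):
    """Return the positions of failures that count as "new".

    `statuses` is the chronological status history of one test case.
    Assumption: a failure is new when it directly follows at least
    `min_non_failing` consecutive non-failing statuses.
    """
    new_failures = []
    streak = 0  # consecutive non-failing statuses seen so far
    for index, status in enumerate(statuses):
        if status == "fail":
            if streak >= min_non_failing:
                new_failures.append(index)
            streak = 0
        else:
            streak += 1
    return new_failures


if __name__ == "__main__":
    history = ["pass"] * 5 + ["fail", "fail"] + ["pass"] * 3 + ["fail"]
    print(new_failure_indices(history))
```

Here only the first failure follows five clean runs. The repeated failure right after it and the later failure after three passes are not counted as new under this reading of the rule.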

Reasonable test regressions are considered healthy and beneficial to the workflow.

For more information regarding Test Effectiveness refer here.

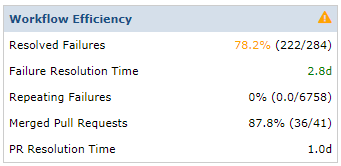

Workflow Efficiency

The Workflow Efficiency indicator provides a macro view of the efficiency of resolving test case failures. Lots of regressions can indicate that the tests are effective, but even with good test coverage you still want rapid recovery. As Martin Fowler argues in his popular Continuous Integration article, failures should be addressed immediately. New test failures that are not addressed in a reasonable timeframe are considered “drifts” and add to the quality debt! By tracking the overall Failures Resolved % and the Average Resolution Time, teams can assess if their failure resolution process is acceptable.
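As a rough illustration of the two tracked values, the following Python sketch computes a Failures Resolved % and an Average Resolution Time from a list of failures. The `Failure` record, its field names, and the choice to average only over resolved failures are assumptions for this example, not a description of how Testspace computes them.

```python
from datetime import datetime, timedelta
from typing import NamedTuple, Optional


class Failure(NamedTuple):
    """A tracked test failure (hypothetical structure)."""
    first_seen: datetime
    resolved_at: Optional[datetime]  # None while the failure is still open


def workflow_efficiency(failures):
    """Return (failures resolved %, average resolution time or None)."""
    resolved = [f for f in failures if f.resolved_at is not None]
    resolved_pct = 100.0 * len(resolved) / len(failures) if failures else 0.0
    if not resolved:
        return resolved_pct, None
    # Average only over resolved failures; open ones have no duration yet.
    total = sum((f.resolved_at - f.first_seen for f in resolved), timedelta())
    return resolved_pct, total / len(resolved)


if __name__ == "__main__":
    start = datetime(2024, 1, 1, 9, 0)
    tracked = [
        Failure(start, start + timedelta(hours=2)),
        Failure(start, start + timedelta(hours=4)),
        Failure(start, None),  # still drifting
    ]
    pct, average = workflow_efficiency(tracked)
    print(f"resolved: {pct:.1f}%, average resolution time: {average}")
```

Open failures lower the resolved percentage but do not enter the average, so a team watching only the average could miss long-running drifts. Tracking both values together avoids that blind spot.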

Letting test failures drift, while source file changes continue, adds considerable effort to triage activity.

For more information regarding Workflow Efficiency refer here.

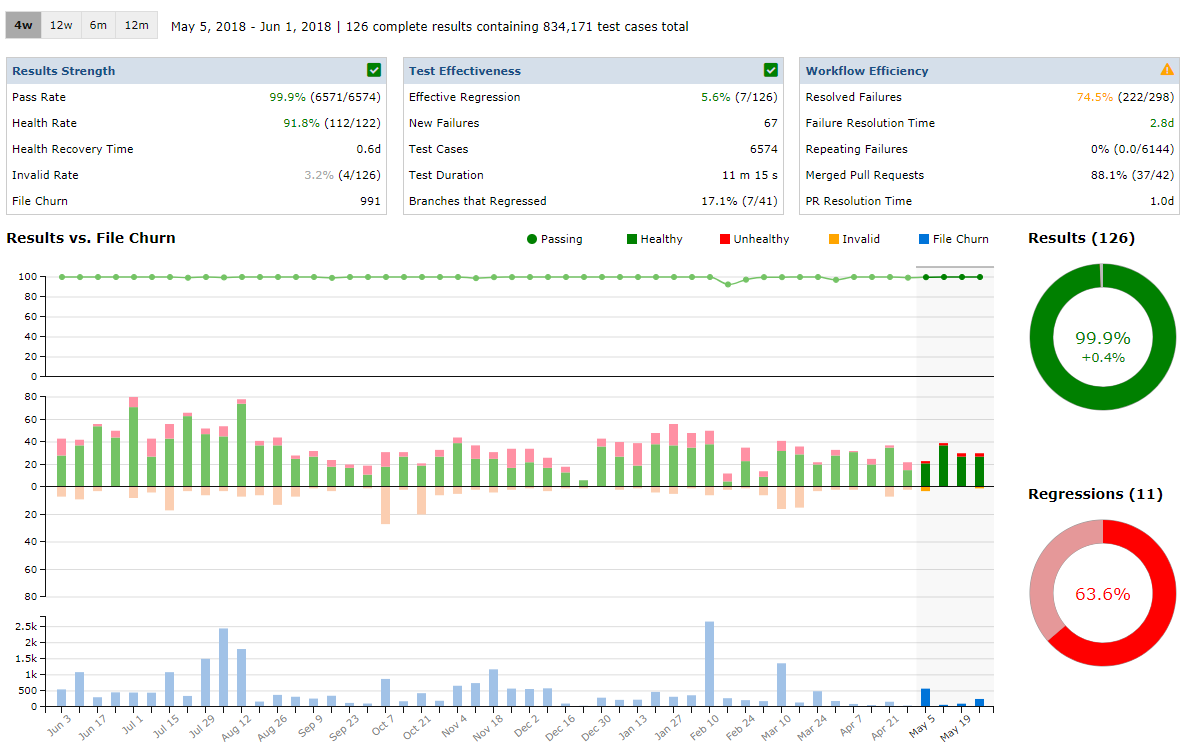

Our Data

We measure and track our Testspace development, and you can see it HERE.

The Testspace server is developed using Ruby on Rails, the source code is hosted on GitHub, and we use CircleCI for test automation.

For more information regarding Insights refer to our help documentation here.

Our Model is constantly improving

We are constantly monitoring and improving our algorithms and statistical models to extract more useful and actionable data that customers can use to improve their software development process. We track regression patterns, resolution rates for failures, risk related to change, and trends in code coverage. To continue improving Testspace Insights, we will continue to collect more data such as:

- Code Review cycle - GitHub Pull Requests, GitLab Merge Requests, Gerrit Code Review, etc.

- Defect Tracking tool - Jira, GitHub Issues, etc.

- Failure triage - tracking user review of test failures.

As a company, our focus is to better enable software development teams to optimize the change-build-test workflow by leveraging all of the data they generate during development. Building a model that adapts, learns, and prescribes in an automated fashion is the focus of Testspace.

Don’t let your data get dropped on the floor

Get set up in minutes!

Try Testspace risk-free. No credit card is required.

Have questions? Contact us.